Intrinsic and Post-Hoc Explainability for Anomaly Detection in Network Intrusion Detection Systems

2026-05-06 | Philipp Denzel

Outline

- Recent developments in cybersecurity

- Challenges for Network Intrusion Detection Systems (NIDS)

- Benchmarks for AI-based NIDS?

- Our study

- Anomaly Transformer architecture

- Intrinsic explainability

- Post-hoc techniques

- Our future plans

Cybersecurity

Cybersecurity is a cat-and-mouse game

Every system that stores, transmits, or processes data is a potential target. Attackers need to find only one gap; defenders must close them all.

And yet, the most damaging attacks often exploit the simplest mistakes.

Heartbleed was such a vulnerability (cf. XKCD 1354: Heartbleed Explanation).

Heartbleed

Heartbleed (CVE-2014-0160)

A missing length check in OpenSSL's heartbeat extension let any client read up to 64 KB of live server memory per heartbeat message, including passwords, private keys, and session tokens.

- ~500,000 servers affected

- est. $500M in remediation

No authentication required.

No entry in the logs.

Silent by design.
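The logic error behind the missing length check can be illustrated with a toy model. This is a sketch, not OpenSSL code: `SECRET`, `heartbeat_vulnerable`, and `heartbeat_fixed` are hypothetical names, and the "server memory" is simulated by placing the request payload next to secret data in one buffer. The vulnerable handler echoes as many bytes as the client claims to have sent; the fixed handler compares the claim to the actual payload length first.

```python
# Toy model of the Heartbleed logic error (illustrative only, not OpenSSL code).
# "Server memory" is simulated by storing the request payload next to secrets.

SECRET = b"private_key=hunter2;session=abc123"  # made-up secret data


def heartbeat_vulnerable(payload: bytes, claimed_len: int) -> bytes:
    """Echo `claimed_len` bytes without checking the real payload length."""
    memory = payload + SECRET      # payload sits adjacent to other data
    return memory[:claimed_len]    # over-read when claimed_len > len(payload)


def heartbeat_fixed(payload: bytes, claimed_len: int) -> bytes:
    """Reject requests whose claimed length exceeds the actual payload."""
    if claimed_len > len(payload):
        return b""                 # drop the malformed request
    return payload[:claimed_len]


if __name__ == "__main__":
    print(heartbeat_vulnerable(b"bird", 4))   # honest request: payload echoed
    print(heartbeat_vulnerable(b"hat", 40))   # inflated length: adjacent bytes leak
    print(heartbeat_fixed(b"hat", 40))        # inflated length: request rejected
```

The malicious request is indistinguishable from a normal one except for the inflated length field, which is why nothing unusual had to appear in the logs.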

AI Raises the Stakes

New York Times; Credit: Samyukta Lakshmi/Bloomberg

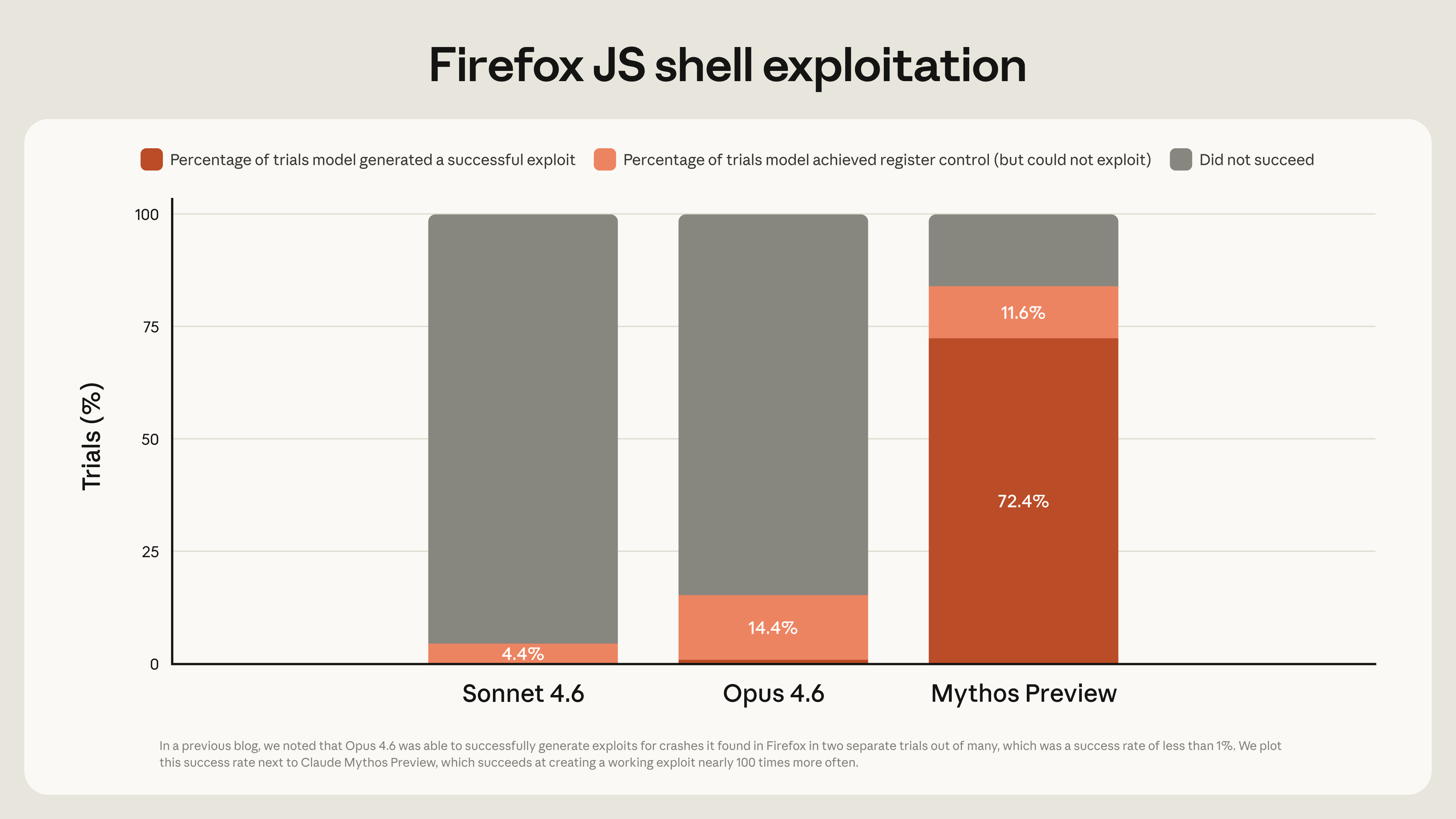

AI as Offensive Weapon

In April 2026, Anthropic withheld Claude Mythos Preview from public release due to its cybersecurity capabilities, the first time a leading AI lab did so.

Key findings from the Anthropic Red Team:

- Zero-days in every major OS and browser

- A 27-year-old OpenBSD bug, exploited fully autonomously

- Successful Firefox exploit trials: Opus 4.6 ×2 → Mythos Preview ×181

The window between discovery and exploit has collapsed.

Source: Anthropic Red Team Blog, April 7 2026

Detection is Not Enough

Network intrusion detection systems (NIDS)

We can detect anomalies.

But can we explain them?

The Black-Box Problem

Intrusion Detection Systems are blind

Modern ML-based IDS achieve high accuracy but produce no justification for their decisions.

What analysts need

- Why was this flagged?

- Which features drove the alert?

- Is this a false positive?

- What action should I take?

What they get

- anomaly score: 0.94

- label: malicious

- confidence: high

A system analysts cannot interpret is useless.

Our Approach

Models with inherent explainability potential

Our approach

- Transformer-based anomaly detector, the Anomaly Transformer (AT), trained on network flow data and evaluated on the revised BCCC-CIC-IDS-2017 benchmark (114 features).

Two complementary explanation tracks:

- Intrinsic: attention weights from the model

- Post-hoc: KernelSHAP for time-series data

What this gives analysts

- Feature-level evidence per alert

- Signals from two independent XAI methods

- A basis for incident report writing

- A path to auditable decisions

Accuracy is necessary.

Explainability is what makes a system deployable.

Benchmark dataset

BCCC-CIC-IDS-2017 Benchmark

Realistic NIDS datasets are rare; most are synthetic, outdated, or contain labelling errors.

Why this dataset?

The revised BCCC-CIC-IDS-2017 version fixes mislabelled flows and removes ground-truth leakage from the widely-used CIC-IDS-2017, enabling fair evaluation.

114 features derived from raw network flows, no payload inspection required.

Attack categories

- DoS / DDoS

- Botnet

- Port scanning

- Brute force (web access, SSH/SFTP)

- Web attacks (SQL injection, XSS)

- Exploitation (Heartbleed, infiltration)

Highly imbalanced: benign traffic dominates.

Realistic class distribution is preserved.

Model Architecture

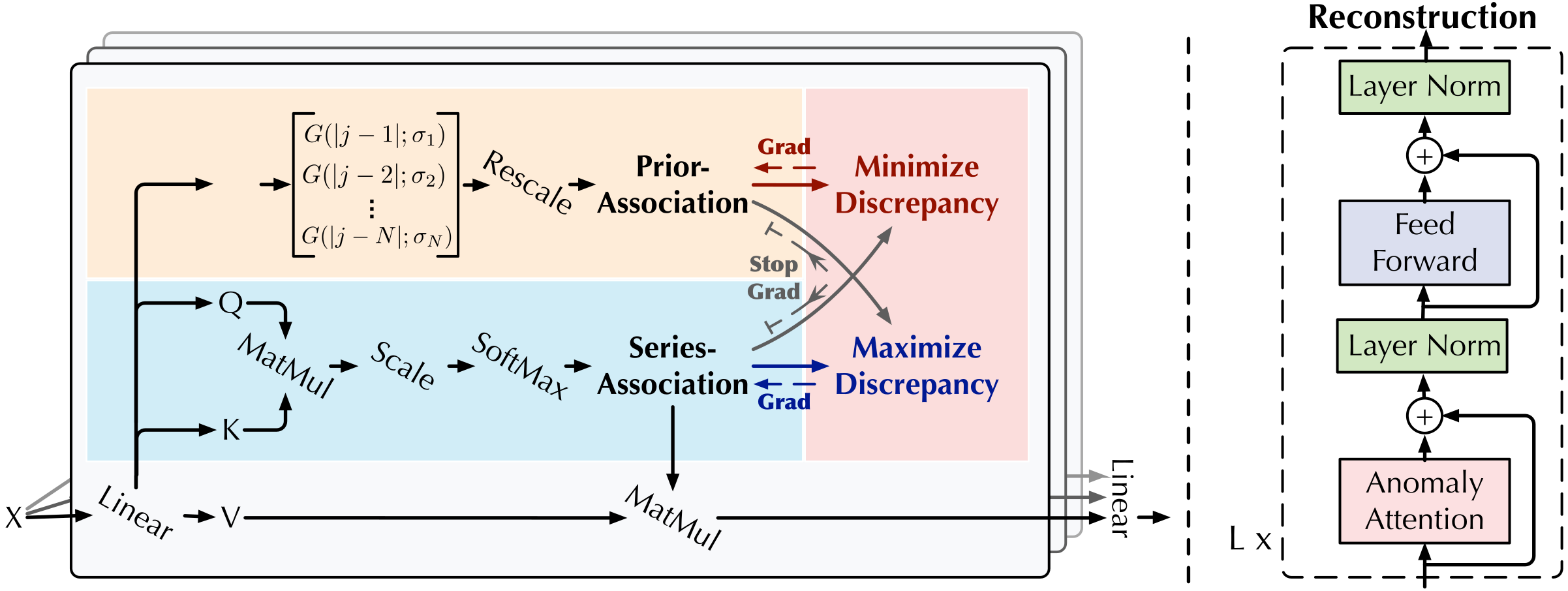

Transformer-Based Anomaly Detection

Figure 1: Xu et al. (2022)

Performance comparison

| Model | Accuracy | Precision | Recall | F1-score | AUROC |
|---|---|---|---|---|---|
| Anomaly Transformer | 0.972 | 0.987 | 0.960 | 0.973 | 0.970 |
| TimesNet | 0.859 | 0.955 | 0.772 | 0.854 | 0.919 |
| Isolation Forest | 0.565 | 0.552 | 0.992 | 0.709 | - |

- Unsupervised training with high class imbalance

- Isolation Forest catches almost all anomalies but creates an overload of false positives for analysts

- AT has substantially higher recall than TimesNet, a SOTA CNN-based anomaly detector (0.960 vs. 0.772)

- AT performs consistently across all types of attacks (even unseen anomaly types)

- Other benchmarks yield similar results
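As a quick consistency check, the F1 column can be recomputed from the precision and recall columns with F1 = 2PR / (P + R). The sketch below uses only the values reported in the table; the function and variable names are illustrative.

```python
# Recompute F1 = 2PR / (P + R) from the precision and recall values in the table.


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall) as reported in the table
reported = {
    "Anomaly Transformer": (0.987, 0.960),
    "TimesNet": (0.955, 0.772),
    "Isolation Forest": (0.552, 0.992),
}

f1 = {name: round(f1_score(p, r), 3) for name, (p, r) in reported.items()}

if __name__ == "__main__":
    for name, value in f1.items():
        print(f"{name}: F1 = {value:.3f}")
```

The recomputed values match the F1 column (0.973, 0.854, 0.709). The Isolation Forest row also shows why high recall alone is not enough: a precision of 0.552 means that roughly 45% of its alerts are false positives.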

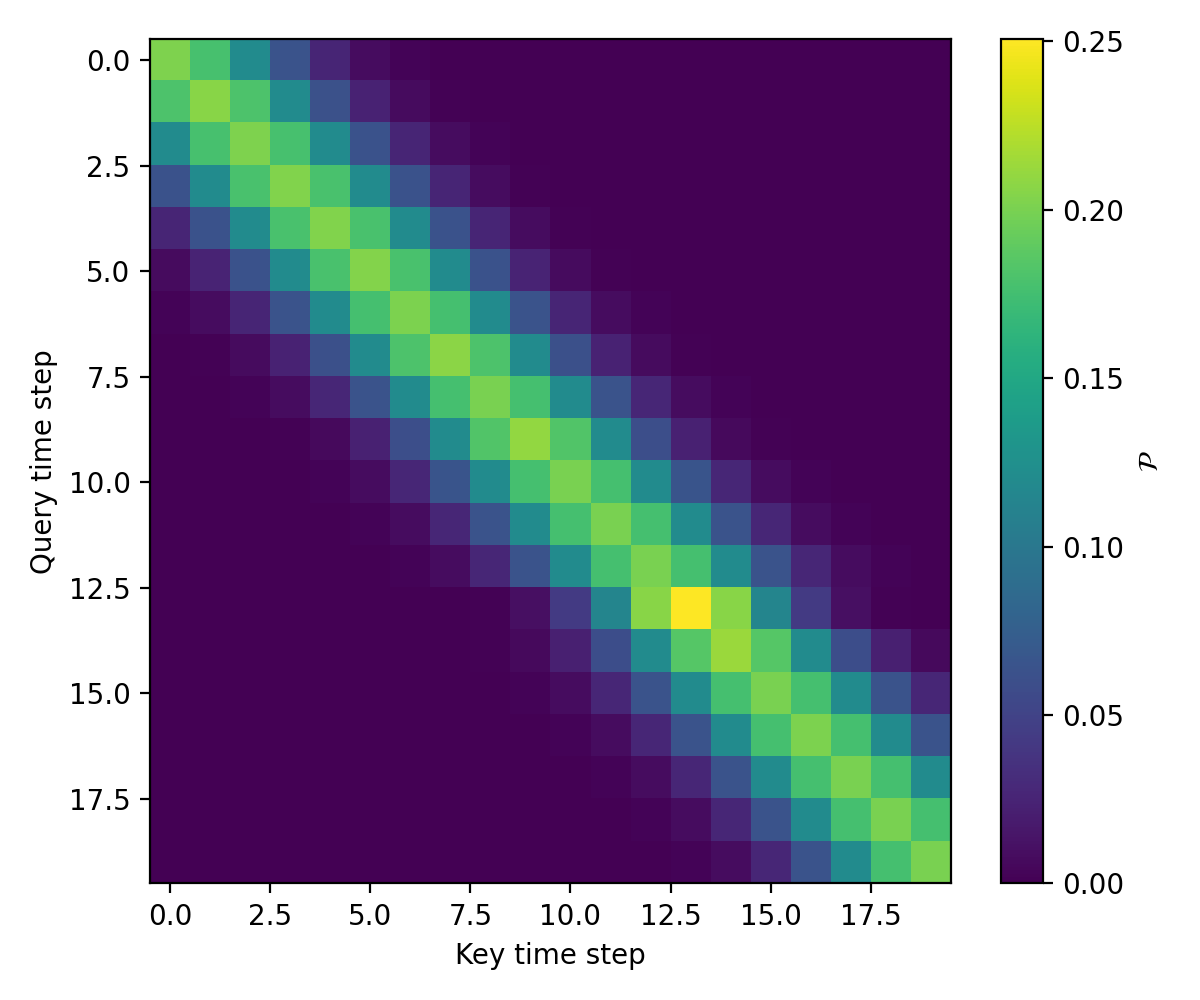

Dual-Track Intrinsic Explainability

Dual-Track Intrinsic Explanations

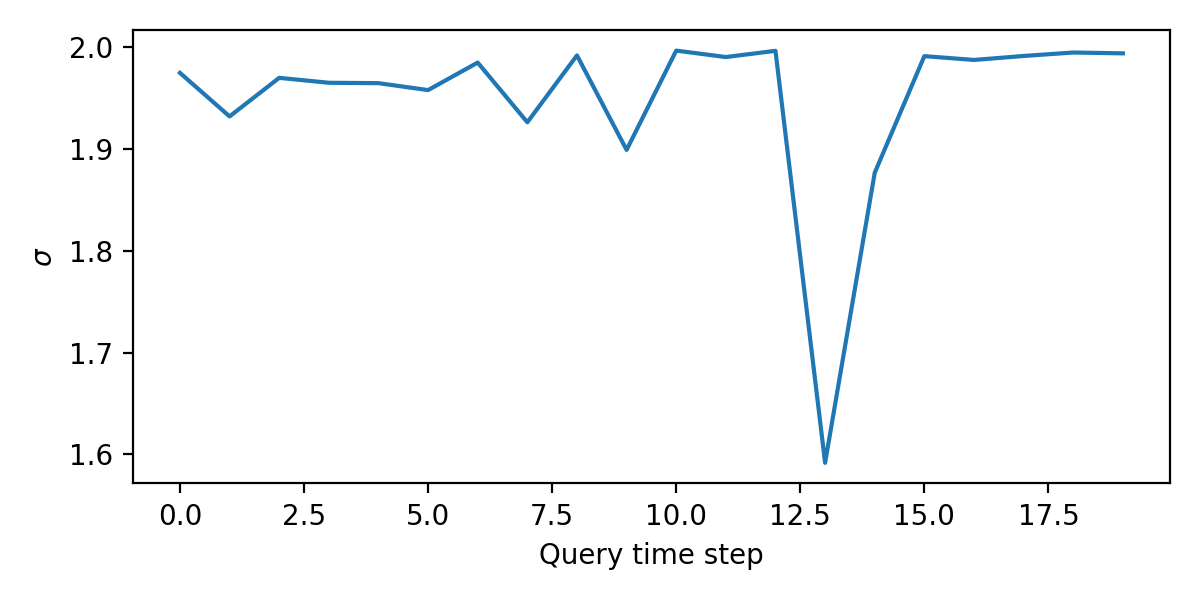

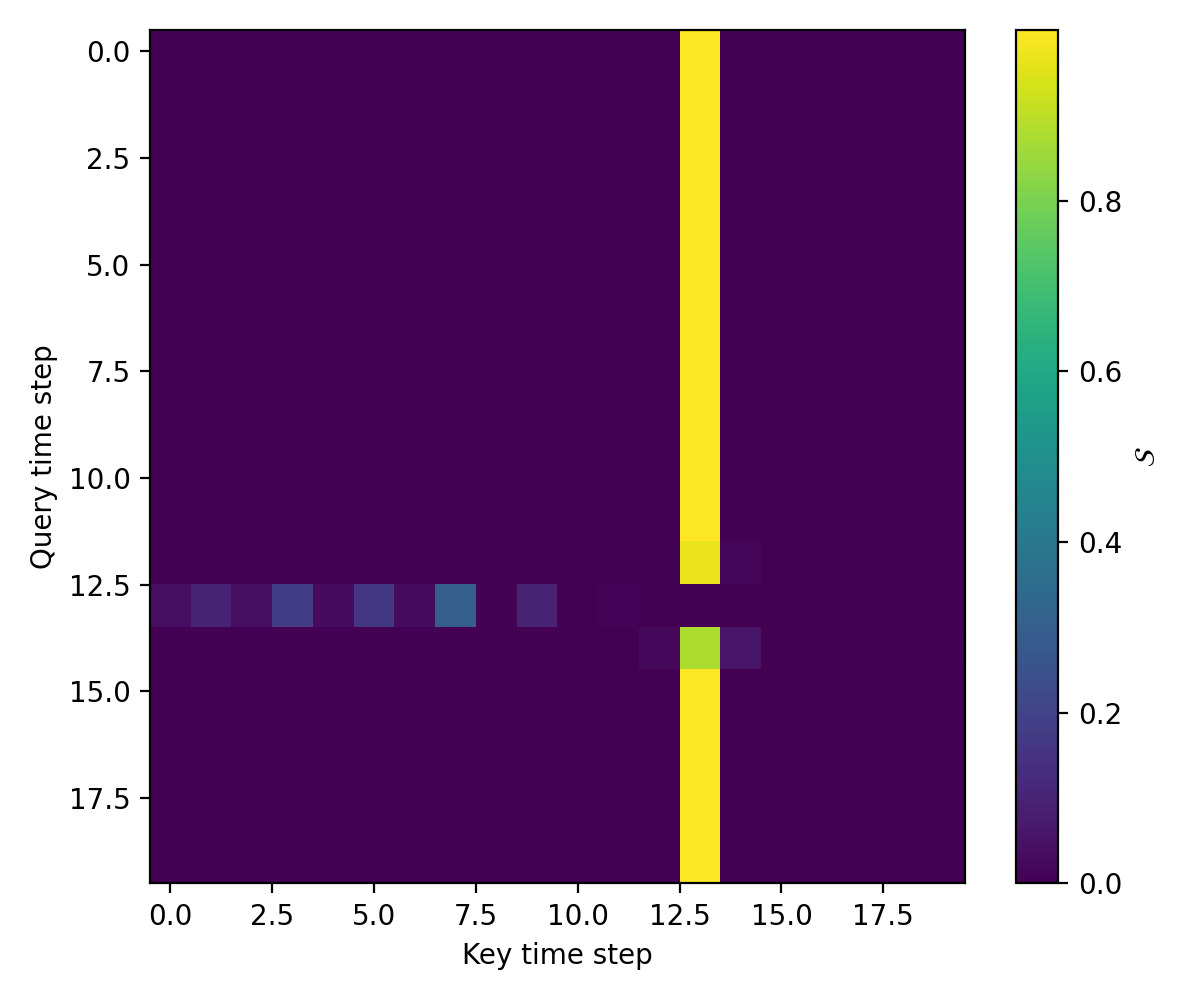

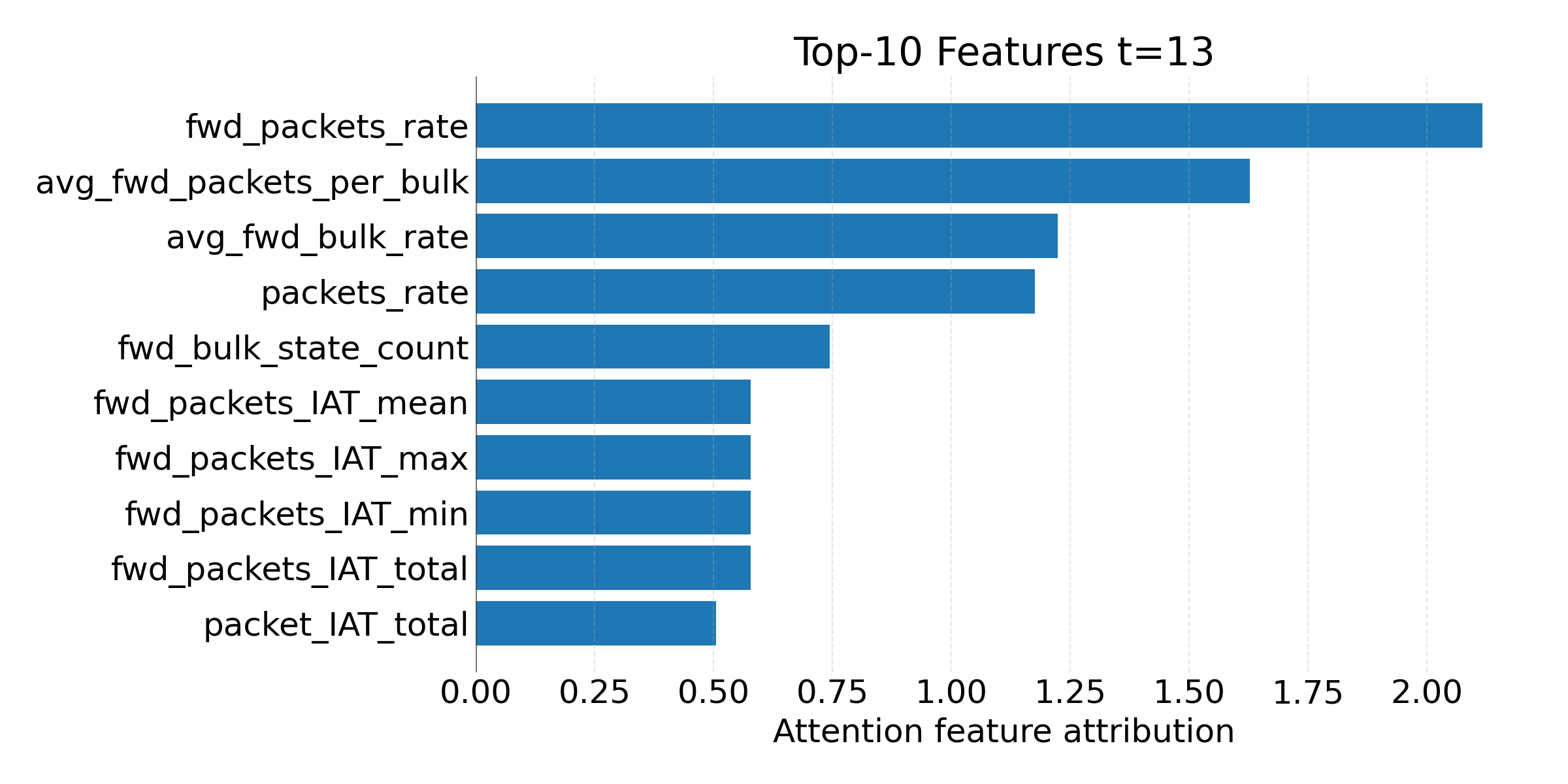

Prior association heatmap & σ-curve

The Gaussian prior narrows at anomalous time steps; a sharper diagonal peak signals concentrated local associations, which are characteristic of anomalies. A dip in σ at step 13 is a concise, unambiguous diagnostic, available at no additional cost.
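A minimal sketch of how such a prior association heatmap arises, assuming the formulation in Xu et al. (2022): a Gaussian kernel over the temporal distance |j - i| with one scale σ per time step, normalized per row. The window length of 20, the baseline σ of 3.0, and the dip to 0.5 are made-up values chosen only to mimic the dip at step 13; in the model, σ is learned.

```python
import math


def prior_association(sigmas: list[float]) -> list[list[float]]:
    """Row-normalized Gaussian kernel over temporal distance |j - i|.

    Row i uses its own scale sigmas[i]; a small sigma concentrates the
    weight on neighbouring time steps (a sharp diagonal peak).
    """
    n = len(sigmas)
    rows = []
    for i, sigma in enumerate(sigmas):
        # The 1 / (sqrt(2*pi) * sigma) factor cancels under row normalization.
        weights = [math.exp(-((j - i) ** 2) / (2.0 * sigma ** 2)) for j in range(n)]
        total = sum(weights)
        rows.append([w / total for w in weights])
    return rows


WINDOW = 20               # assumed window length, not taken from the slides
sigmas = [3.0] * WINDOW   # assumed baseline scale for normal time steps
sigmas[13] = 0.5          # mimic the dip in sigma at step 13

prior = prior_association(sigmas)
flagged_step = min(range(WINDOW), key=lambda t: sigmas[t])

if __name__ == "__main__":
    print("step with smallest sigma:", flagged_step)
    print("diagonal weight at a normal step:", round(prior[10][10], 3))
    print("diagonal weight at the flagged step:", round(prior[13][13], 3))
```

Because σ is read directly from the model, locating the flagged step needs no extra forward passes or perturbations.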

Attention map

Row-wise weights reveal temporal precursors: earlier events that contributed to triggering the anomaly. Column-wise bright stripes mark the anomalous step as globally salient.
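The two readings of the attention map can be sketched as follows. The 5×5 matrix is made-up toy data (each row sums to 1, with step 3 playing the anomalous step), and `top_precursors` and `column_salience` are hypothetical helper names, not part of the Anomaly Transformer code.

```python
def top_precursors(attn, step, k=2):
    """Earlier time steps that row `step` attends to most strongly."""
    earlier = [(attn[step][j], j) for j in range(step)]
    return [j for _, j in sorted(earlier, reverse=True)[:k]]


def column_salience(attn):
    """Mean attention each time step receives from all rows (column mean)."""
    n_rows = len(attn)
    n_cols = len(attn[0])
    return [sum(attn[i][j] for i in range(n_rows)) / n_rows for j in range(n_cols)]


# Toy attention map (made-up values); row i = how step i attends to all steps.
attn = [
    [0.60, 0.10, 0.10, 0.15, 0.05],
    [0.10, 0.50, 0.10, 0.25, 0.05],
    [0.05, 0.10, 0.45, 0.35, 0.05],
    [0.05, 0.30, 0.15, 0.45, 0.05],
    [0.05, 0.05, 0.10, 0.40, 0.40],
]

precursors = top_precursors(attn, step=3)   # row-wise reading
salience = column_salience(attn)            # column-wise reading
most_salient = max(range(len(salience)), key=lambda j: salience[j])

if __name__ == "__main__":
    print("precursors of step 3:", precursors)
    print("globally most salient step:", most_salient)
```

In this toy map, step 1 is the strongest precursor of step 3, and column 3 is the bright stripe: every row puts noticeable weight on it.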

Complementary Explainability Modalities

Two Complementary Explanation Modalities

Intrinsic

Prior association heatmaps and σ-curves localize when in the window the anomaly occurs. Attention feature attribution reveals which features the model focused on at that time step.

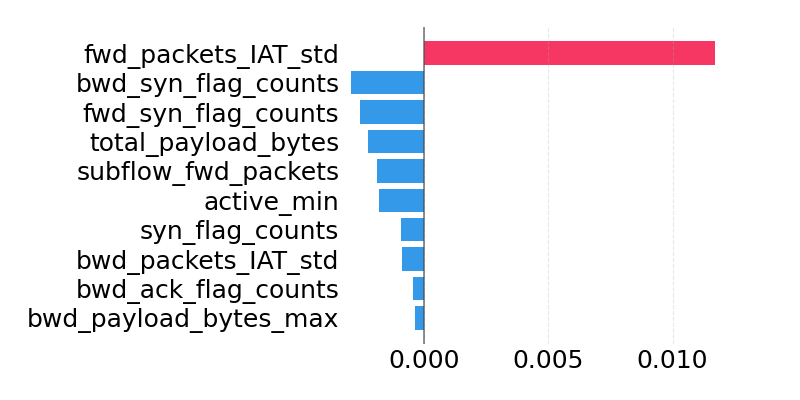

Post-hoc

Time-aware KernelSHAP answers: "Which features, if absent, would most reduce the anomaly score?" It emphasizes packet statistics and flag families.
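The question KernelSHAP answers can be made concrete with exact Shapley values on a toy score. This is a sketch under stated assumptions, not the time-aware KernelSHAP used in the study: KernelSHAP approximates these values by sampling coalitions, whereas the code below enumerates all of them, which is only feasible for a handful of features. The scoring function, the feature names, and the all-zero baseline standing in for "absent" are made up.

```python
from itertools import combinations
from math import factorial


def shapley_values(score, x, baseline):
    """Exact Shapley values of `score` at `x`; absent features take baseline values."""
    n = len(x)

    def value(coalition):
        # Features in the coalition keep their observed value, the rest are "absent".
        masked = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return score(masked)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Hypothetical features and toy anomaly score with one interaction term.
FEATURES = ["packet_length_mean", "syn_flag_count", "flow_duration"]


def toy_score(v):
    return 0.5 * v[0] + 0.1 * v[1] + 0.3 * v[0] * v[2]


x = [1.0, 1.0, 1.0]          # observed (normalized) feature values
baseline = [0.0, 0.0, 0.0]   # stand-in for "feature absent"
phi = shapley_values(toy_score, x, baseline)

if __name__ == "__main__":
    for name, contribution in zip(FEATURES, phi):
        print(f"{name}: {contribution:+.3f}")
```

The attributions sum to the difference between the score at `x` and the score at the baseline, and the interaction term is split evenly between the two features involved.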

We observed minimal overlap between the two feature sets. Relying on a single modality is insufficient.
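One simple way to quantify such overlap is the Jaccard index of the top-k features from each track. The slides do not state how overlap was measured, so this is only an illustrative sketch; the feature names and attribution values are made up.

```python
def top_k(attributions: dict[str, float], k: int) -> set[str]:
    """Names of the k features with the largest absolute attribution."""
    ranked = sorted(attributions, key=lambda name: abs(attributions[name]), reverse=True)
    return set(ranked[:k])


def jaccard(a: set[str], b: set[str]) -> float:
    """Size of the intersection divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0


# Made-up attributions for one alert (hypothetical feature names).
attention_attr = {
    "flow_duration": 0.40,
    "fwd_iat_mean": 0.30,
    "bwd_iat_std": 0.20,
    "syn_flag_count": 0.05,
    "packet_length_mean": 0.05,
}
shap_attr = {
    "packet_length_mean": 0.35,
    "syn_flag_count": 0.30,
    "flow_duration": 0.25,
    "fwd_iat_mean": 0.05,
    "bwd_iat_std": 0.05,
}

overlap = jaccard(top_k(attention_attr, 3), top_k(shap_attr, 3))

if __name__ == "__main__":
    print(f"top-3 Jaccard overlap: {overlap:.2f}")
```

A value near 0 means the two tracks highlight different features; a value near 1 means they agree.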

Implications for Deployment

Towards Auditable IDS

What analysts gain

Temporal localization: intrinsic explanations pinpoint when in the traffic window the anomaly occurred, supporting rapid triage.

Feature-level audit trail: SHAP attributions identify which raw inputs drove the score, aligned with domain expertise and attack signatures.

Model validation: when both tracks agree, confidence is high. When they diverge, the divergence is itself informative.

Recommended workflow

- Intrinsic σ-curve flags when to look

- Attention map reveals what preceded the event

- SHAP provides feature evidence for the incident report
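The three workflow steps can be chained into one triage summary per alert. The sketch below is a hypothetical helper operating on made-up inputs (a σ-curve, an attention map, and SHAP attributions for one window); it only shows how the signals could be combined, not how the study's tooling works.

```python
def triage_report(sigma_curve, attn, shap_values, n_precursors=2, n_features=2):
    """Combine the three signals into one summary for an alert."""
    # 1. Intrinsic sigma-curve: the step with the smallest sigma is where to look.
    step = min(range(len(sigma_curve)), key=lambda t: sigma_curve[t])
    # 2. Attention row of that step: strongest earlier steps are candidate precursors.
    earlier = sorted(range(step), key=lambda j: attn[step][j], reverse=True)
    # 3. SHAP: features with the largest absolute attribution go into the report.
    features = sorted(shap_values, key=lambda f: abs(shap_values[f]), reverse=True)
    return {
        "anomalous_step": step,
        "precursors": earlier[:n_precursors],
        "top_features": features[:n_features],
    }


# Made-up inputs for a window of five time steps.
sigma_curve = [2.9, 3.1, 3.0, 0.6, 2.8]
attn = [
    [0.60, 0.10, 0.10, 0.15, 0.05],
    [0.10, 0.50, 0.10, 0.25, 0.05],
    [0.05, 0.10, 0.45, 0.35, 0.05],
    [0.05, 0.30, 0.15, 0.45, 0.05],
    [0.05, 0.05, 0.10, 0.40, 0.40],
]
shap_values = {"packet_length_mean": 0.35, "syn_flag_count": -0.30, "flow_duration": 0.10}

report = triage_report(sigma_curve, attn, shap_values)

if __name__ == "__main__":
    print(report)
```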

No single modality is sufficient.

A multifaceted approach is essential for operational trust, compliance, and auditability.

Future work

Open Directions

Ongoing

Broader benchmark: more realistic datasets to strengthen validation.

Testing on Locked Shields Partners Run 24 (and 23).

Preliminary results: AT: F1 ≈ 0.81; TimesNet: F1 ≈ 0.72

Suricata integration: combining signature-based and anomaly-based detection in a single open-source IDS.

Near-term

Quantitative XAI metrics: fidelity and stability scores to evaluate explanation quality beyond qualitative comparison.

Lightweight SHAP: near-real-time approximations in a live environment.

Adversarial robustness: test stability of detection under evasion attempts.

Longer-term

GNN-based IDS: topology-aware representations with node-level explanations mapped onto the network itself.

Real-time application: empirical validation that dual-track explanations improve decision speed and accuracy in practice.

Summary

What we did

Evaluated the Anomaly Transformer for network intrusion detection on the corrected BCCC-CIC-IDS-2017 benchmark, outperforming CNN-based and classical baselines.

Introduced a dual-track explainability framework combining intrinsic attention signals with time-aware KernelSHAP attributions.

What we found

The two explanation modalities are complementary: intrinsic mechanisms localize anomalies in time, while SHAP provides feature-level audit trails.

Relying on a single modality is insufficient.

A multifaceted approach is essential for operational trust, compliance, and auditability.

Detection accuracy is necessary, but explainability is what makes a system deployable, auditable, and trustworthy.